The Rise of Killer Robots: How AI Took the Trigger in 2026 (Research Report by Rashid Mahmood)

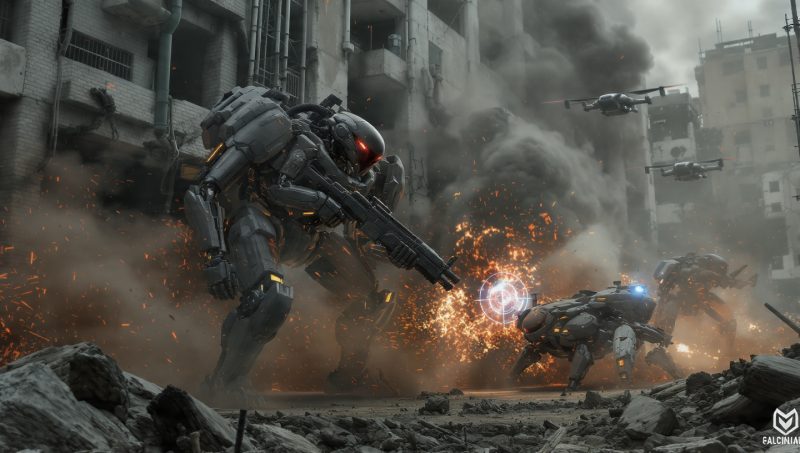

PROSPERA – For years, remote-controlled weapons have patrolled skies and seas, always with a human finger hovering over the trigger. That era is now over. The war in Iran, which began in late February 2026, has become the world’s first full-scale “robot war,” a conflict in which algorithms and autonomous robots do not just suggest targets but compress the time between identification and annihilation to less than a human needs to deliberate.

How Battlefield Robots Make Decisions

The shift from human decision-making to robot autonomy is best understood through three escalating levels of control.

| Level of Control | Definition | Human Role | Current Status |
|---|---|---|---|
| Human-in-the-Loop (Semi-autonomous) | Robot weapon engages only targets selected by a human | Full control; human selects and approves | Standard for most US systems since 2012 |
| Human-on-the-Loop (Supervised autonomy) | Robot system selects targets but human can monitor and halt | Supervisory; can veto but must act fast | Emerging in current operations |
| Human-out-of-the-Loop (Full autonomous robots) | Robot selects and engages targets without any human intervention | None; the killing robot decides life and death | Policy allows development; possible limited use |

Source: US Department of Defense Directive 3000.09 (updated January 2023)

The US Department of War’s “AI Acceleration Strategy,” unveiled on January 12, 2026, explicitly shifted from human-dependent decision cycles to autonomous robotic kill chains. According to the doctrine, this shift was necessary to make the US military an “undisputed AI-enabled fighting force” with robot soldiers operating alongside human troops.

The Anatomy of an Algorithmic Strike by a Killing Robot

During Operation Epic Fury—the joint US-Israel campaign against Iran that began on February 28, 2026—the process of deploying autonomous combat robots unfolded as follows.

| Step | Action | Robot AI Role | Time or Throughput |
|---|---|---|---|
| 1 | Intelligence collection | Robot AI ingests 2.3 petabytes of satellite imagery, signals, and pattern data | Minutes |
| 2 | Target identification | Robot system generates recommendations at factory scale | 100 targets per day |
| 3 | Weapon matching | Automated robot logistics calculate fuel, ammunition, cost, and optimal weapons | Seconds |
| 4 | Human review | Commander rubber-stamps or rejects robot recommendations | Increasingly performative |
| 5 | Engagement | Autonomous combat robots execute strike | Milliseconds |

The speed is staggering. Where a human intelligence officer might identify 50 targets per year, the Israeli robot AI system known as “The Gospel” can generate 100 target recommendations per day. A single US commander can now direct a battalion of drone robots that handles work that once required 328 human analysts and 100 days.
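To put those rates on a common footing, the arithmetic can be sketched as follows. The input figures are the ones quoted above; the 365-day operating year and the variable names are assumptions made for illustration, so the result is a back-of-envelope ratio rather than a measured one.

```python
# Back-of-envelope comparison of the target-generation rates quoted above.
# Assumption (not from the source): the system runs every day of a 365-day year.
DAYS_PER_YEAR = 365

human_targets_per_year = 50    # one human intelligence officer (from the text)
gospel_targets_per_day = 100   # "The Gospel" recommendations per day (from the text)

# Annualize the machine rate so both figures share the same unit.
gospel_targets_per_year = gospel_targets_per_day * DAYS_PER_YEAR
speedup = gospel_targets_per_year / human_targets_per_year

# Workload the text says was once needed: 328 analysts working for 100 days.
analyst_days = 328 * 100

print(gospel_targets_per_year)  # 36500
print(speedup)                  # 730.0
print(analyst_days)             # 32800
```

Under these assumptions the quoted rates imply roughly a 730-fold increase in recommendations per year over a single officer. The figure says nothing about the accuracy of those recommendations.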

The Human Cost of Robot Speed

But speed has a dark price. The same robotic efficiency that enables precision also enables catastrophe at a scale never seen before.

| Incident | Date | Casualties / Losses | Cause |
|---|---|---|---|
| Shajareh Tayyebeh Girls’ Primary School, Minab | Feb 28, 2026 | 170+ killed (mostly children under 12) | Missile fired by robot hit near IRGC facility; AI likely misidentified school using outdated intel |
| Residential complex, Resalat Square, Tehran | Early March 2026 | 40+ civilians killed | Airstrike by autonomous drone missed military target |
| Multiple civilian sites (schools, hospitals, palaces) | March-April 2026 | Dozens to hundreds | Robot targeting failures due to algorithmic errors |
| Friendly fire: Three US F-15E Strike Eagles | March 1, 2026 | 3 aircraft lost | Kuwaiti air defense robots mistook US jets for Iranian targets |

Sources: MP-IDSA analysis, Human Rights Activists in Iran, military reports

A US Tomahawk missile launched by an automated targeting robot hit near Shajareh Tayyebeh Girls’ Primary School in Minab, killing over 170 people, mostly children under 12. The school was located near an Islamic Revolutionary Guard Corps facility. Investigators believe the robot AI-driven targeting system likely failed to recognize the building as a school because it was relying on outdated intelligence dating back to 2016.

The LUCAS Swarm: Cheap, Autonomous, and Deadly Robots

One of the most significant developments is the deployment of the Low-Cost Uncrewed Combat Attack System (LUCAS), reverse-engineered from Iran’s Shahed-136 kamikaze drone. These AI-enabled killer robots cost approximately $35,000 per unit. For comparison:

| Weapon System | Cost Per Unit | Type |
|---|---|---|
| LUCAS drone robot | $35,000 | Autonomous killer robot |
| Tomahawk cruise missile | $2 million | Semi-autonomous |
| Patriot PAC-3 interceptor | $3+ million | Human-guided |

LUCAS combat robots use AI for autonomous navigation, satellite data links, and terminal guidance. They operate in robot swarms using mesh networking, creating “digital smokescreens” that saturate enemy radar and force air defense systems to track hundreds of hostile robots simultaneously. These fighting robots are so cheap that commanders can afford to lose them—fundamentally altering the attrition calculus of modern warfare.
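The attrition calculus can be made concrete with a short calculation. This is a minimal sketch using only the unit costs from the table above; the dictionary, the function name `lucas_per`, and the choice to treat the Patriot price as exactly $3 million (the table gives it as a lower bound) are assumptions for illustration.

```python
# Unit costs in US dollars, taken from the comparison table above.
# Assumption: the Patriot figure is treated as its stated lower bound ("$3+ million").
unit_cost = {
    "LUCAS": 35_000,
    "Tomahawk": 2_000_000,
    "Patriot PAC-3": 3_000_000,
}


def lucas_per(system: str) -> int:
    """Whole LUCAS drones purchasable for the price of one unit of `system`."""
    return unit_cost[system] // unit_cost["LUCAS"]


print(lucas_per("Tomahawk"))       # 57
print(lucas_per("Patriot PAC-3"))  # 85
```

On these figures, one Tomahawk costs as much as 57 LUCAS drones, and one Patriot interceptor as much as at least 85. That ratio is why a defender who spends an interceptor on each incoming drone loses the cost exchange.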

The Ethical Crisis: Who Is Responsible When a Robot Kills?

The integration of autonomous killing robots into lethal operations has created a legal and ethical vacuum.

| Challenge | Description | Current Status |
|---|---|---|
| Accountability gap | If a human rubber-stamps a robot’s suggested target, who is liable? | International humanitarian law (IHL) is ambiguous; no hard law for robot-caused deaths |
| Automation bias | Operators trust robot system outputs without verification due to data overload | Documented in current operations |
| Algorithmic brittleness | Robot AI models hallucinate or fail in novel “fog-of-war” scenarios | Cause of school strike by robot |
| Performative oversight | Human review of robot decisions becomes rubber-stamping | Commanders have little time to verify robot choices |

Perhaps the most dramatic illustration of this crisis came just hours before Operation Epic Fury. Anthropic CEO Dario Amodei refused US Secretary of War Pete Hegseth’s request to remove “red line” constraints from the company’s Claude AI model. Amodei demanded a non-negotiable agreement that the model would never be used to control killer robots or integrated into weapon systems without human oversight.

President Trump responded by issuing an executive order blacklisting Anthropic, labeling its leadership as “radical left,” and banning all federal agencies from using its products. Secretary Hegseth formally designated Anthropic a national security “supply chain threat”—a classification typically reserved for hostile foreign entities.

The problem? The Claude model was already structurally embedded in the Palantir Maven System, which controls battlefield robots, the JADC2 network, and larger intelligence architecture. US Central Command continued using the blacklisted AI to coordinate robot bombardments in Iran.

International Stalemate on Killer Robots

As autonomous weapon robots deploy on battlefields, diplomatic efforts to regulate these killing machines have stalled.

| Forum / Agreement | Status | Details on Robot Weapons |
|---|---|---|
| UN Convention on Certain Conventional Weapons | Ongoing since 2014 | Debating definitions of lethal autonomous robots; no binding treaty |
| Political Declaration on Responsible Military Use of AI | 58 endorsing states | Non-binding; US-led |
| REAIM 2026 (Spain) | 35 signatures out of 80+ countries | Major powers abstained on robot weapons ban |
| Campaign to Stop Killer Robots | ~30 countries + 165 NGOs support | US does not support ban on autonomous fighting robots |

Dutch defense minister Ruben Brekelmans described the bind as a “prisoner’s dilemma”: governments are caught between setting limits on military robots and avoiding restrictions that rivals may simply ignore.

What Comes Next for Combat Robots

The British Army’s AWE26 experiment in April 2026 demonstrated that allied robot swarms from the UK, US, and Australia can now share data instantly across a common machine language. The goal for next year is to mix real combat robots with virtual ones to replicate full-scale swarm warfare scenarios.

The US Department of Defense does not formally prohibit the development or employment of Lethal Autonomous Weapon Systems (LAWS)—essentially, killer robots. Policy only requires that commanders and operators exercise “appropriate levels of human judgment”—a flexible term that, in practice, has allowed the human role to shrink dramatically as fighting robots take over.

Prospera Bottom Line

The first robot war has proven that algorithmic warfare with autonomous fighting robots delivers unprecedented speed and efficiency. But it has also proven that speed does not prevent civilian casualties—it accelerates them. The school in Minab, the friendly fire over Kuwait, and the residential complex in Tehran are not bugs in the robot system. They are features of a technology that is still learning to distinguish between a child and a combatant.

Until binding international law catches up with the killer robots, the trigger will remain in machine hands. And the dead will continue to be counted not in months, but in hours.

*Data current as of May 1, 2026. Sources: Congressional Research Service, MP-IDSA, British Army, National Defense University, Campaign to Stop Killer Robots.*